March 2026: Arena Updates across Product, Leaderboard Rankings & Research

March 2026 brought major updates to the Arena leaderboard, including new rankings across document, video, text, and code models. In this monthly roundup, we break down the top-ranked AI models, the latest LLM benchmark results, and the key trends shaping AI evaluation.

Whether you're a daily voter or just checking in on where the frontier stands, here's what changed and what it means.

New Arenas Launched

Document Arena Goes Live

After an early access period collecting community votes, the Document Arena leaderboard is now live with fully ranked scores powered by real user-uploaded PDFs.

Document Arena top rankings at launch:

| Rank | Model | Score |
|---|---|---|
| #1 | Claude Opus 4.6 | 1525 (+51 pts ahead of #2) |
| #2 | Claude Sonnet 4.6 | not listed |
| #3 | Claude Opus 4.5 | not listed |

GPT-5.4 joined the leaderboard later and tied Claude Sonnet 4.6 at #2.
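Arena scores are typically read on an Elo-style scale. To make the 51-point lead at the top more concrete, here is a minimal sketch that converts a score gap into an expected head-to-head preference rate. It assumes the standard Elo convention (base 10, scale factor 400); whether Arena's published scores map onto win rates exactly this way is an assumption, and the function name `expected_win_rate` is illustrative.

```python
def expected_win_rate(score_a: float, score_b: float, scale: float = 400.0) -> float:
    """Probability that model A is preferred over model B.

    Assumption: standard Elo logistic curve with base 10 and scale 400.
    """
    return 1.0 / (1.0 + 10 ** ((score_b - score_a) / scale))


# Only the gap matters: #1 at 1525 versus a model 51 points lower.
p = expected_win_rate(1525, 1525 - 51)
print(f"{p:.3f}")
```

Under that assumption, a 51-point lead means Claude Opus 4.6 would be expected to win roughly 57% of head-to-head votes against the #2 model: a clear edge, but far from a sweep.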

You can upload your own PDFs to summarize, extract insights, or ask questions directly—and your votes shape the rankings. Check out the best models for understanding and analyzing documents.

Video Edit Arena Launches

We launched the Video Edit Arena this month: the first leaderboard dedicated to evaluating frontier AI video editing models with real community votes. Thousands of votes on side-by-side comparisons have already been cast.

Video Edit Arena top rankings at launch:

| Rank | Model | Lab |
|---|---|---|
| #1 | Grok-Imagine-Video | xAI |
| #2 | Kling-o3-pro | Kling AI |
| #3 | Kling-o1-pro | Kling AI |
| #4 | Gen4-aleph | Runway |

The AI video editing space is evolving, but still fragmented across providers—no single model dominates yet.

Leaderboard Product Updates

Compare AI Models by Price and Context Window

Arena's leaderboards now display input/output cost per 1M tokens and max context window size directly alongside Arena scores, so you can compare AI models on performance, cost, and context in one view instead of on raw rankings alone.
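As a rough illustration of why per-token prices matter alongside scores, the sketch below estimates the cost of a single request from input and output prices per 1M tokens. It uses the prices quoted later in this roundup for MiniMax M2.7 and Gemini 3.1 Flash Lite Preview, assuming the MiniMax figures are listed as input / output in that order. The `Pricing` class, the `request_cost` helper, and the 10k-in / 2k-out workload are hypothetical choices for illustration, not part of Arena's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical container for USD prices per 1M tokens."""
    input_per_mtok: float
    output_per_mtok: float


def request_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request: tokens / 1M * price, summed over input and output."""
    return (input_tokens * p.input_per_mtok + output_tokens * p.output_per_mtok) / 1_000_000


# Prices quoted in this roundup (input / output per MToken; MiniMax order assumed).
models = {
    "MiniMax M2.7": Pricing(0.30, 1.20),
    "Gemini 3.1 Flash Lite Preview": Pricing(0.25, 1.50),
}

# Hypothetical workload: a 10k-token prompt with a 2k-token answer.
for name, pricing in models.items():
    print(f"{name}: ${request_cost(pricing, 10_000, 2_000):.4f}")
```

With this workload the two land only $0.0001 apart. Shifting the mix toward output tokens favors MiniMax M2.7's lower output price, while prompt-heavy workloads favor Gemini 3.1 Flash Lite Preview's lower input price.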

Customize Your Leaderboard View

Everyone uses AI differently. Arena is built to surface the comparison that matters for your specific use case. You can now customize which columns appear in your Arena leaderboard view, including:

- Rank Spread

- Model Organization

- License

- Total Votes

- Price ($/MToken)

- Max Context

Research & Deep Dives

Arena Max: Intelligent Model Router

This month we published a full deep dive on Arena Max, our intelligent model router, which sends each prompt to the most capable model while keeping latency in mind. Researchers Derry Xu and Evan Frick explained why smart routing still matters: even as frontier models become more capable generalists, the performance gaps between them are more granular than aggregate leaderboards suggest.

Read more about Max on the blog

The BS Benchmark: Can AI Detect Nonsense?

AI Capability Lead Peter Gostev tested 80 models with deliberately nonsensical questions to see which ones push back and which confidently invent fake metrics. One surprising finding: thinking models didn't necessarily perform better on this dimension.

How AI Search Actually Works

Research Engineer Logan explained why AI search is harder than it looks: the challenge isn't retrieval but reasoning about which sources to trust and how to incorporate them.

User Satisfaction Trends Since 2023

We tracked how often users rated both responses in Battle Mode as "bad" across the Top 25 models, going back to 2023. The trend offers a real-world view of how the frontier has shifted.

The Nano Banana Origin Story

An anonymous image model appeared on Arena on August 12, 2025, and quickly became the most-voted model in Arena's history. We sat down with Lead Engineer Yue to tell the full story, from codename to reveal (the model was built on Google Gemini and publicly released on August 26, 2025).

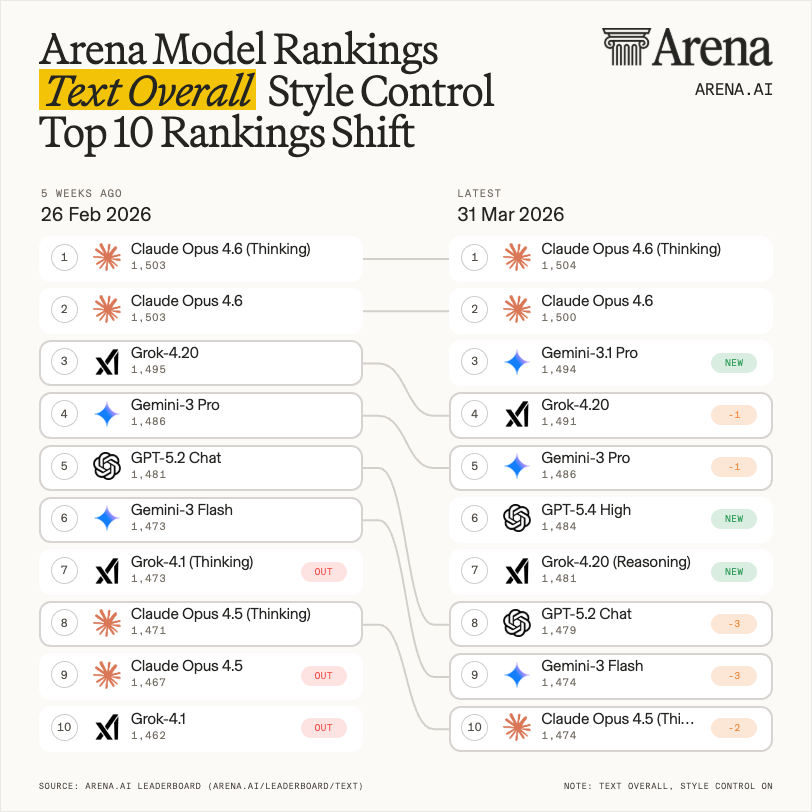

Top 10 Shifts Since Last Month

How did the Top 10 in Text Arena change in the last month?

Claude Opus 4.6 models remained on top, with new entrants Gemini 3.1 Pro, GPT-5.4 High, and Grok 4.20 (Reasoning) landing in 3rd, 6th, and 7th place, respectively.

Grok 4.1 and Claude Opus 4.5 dropped out of the Top 10 in March.

Academic Partnerships Program

We're funding independent research in AI evaluation and measurement—up to $50k per project. The Q1 deadline to apply is March 31.

👉 Read more about the program on our blog

New Models on the Leaderboards

For the full list of everything that landed in March, check the leaderboard changelog. Highlights:

- OpenAI GPT-5.4 family — the month's biggest arrival. GPT-5.4, GPT-5.4-High, Mini, Nano, and Mini High landed across Text, Vision, and Code arenas throughout March. GPT-5.4-High reached top 10 Text and top 6 Code; Mini High hit #10 for Arena Expert.

- xAI Grok 4.20 Beta Reasoning — #7 Text Arena, #11 Vision Arena (making xAI a top 5 Vision lab), #28 Code Arena.

- Alibaba Qwen3.5 medium models — top 10 open models in both Text and Code; smaller variants are competitive with previous-generation models 6-7x their size.

- Alibaba Qwen 3.5 Max Preview — #3 Math, #10 Expert, #15 Text Arena overall.

- Alibaba Qwen-Image-2.0 — now in Image Arena; prior gen Qwen-Image-2512 is currently the #2 open model in Text-to-Image.

- MiniMax M2.7 — #8 in Code Arena and the most cost-efficient model in the top 10, at $0.30 / $1.20 per MToken.

- Xiaomi MiMo V2 Pro — top 6 lab in Code Arena, #10 Arena Expert. MiMo V2 Omni also arrived in Vision Arena.

- Microsoft MAI-Image-2 — debuted at #5 in Image Arena with significant gains over MAI-Image-1 across all 7 sub-categories, led by Text Rendering (+115 pts).

- NVIDIA Nemotron 3 Super — #37 among open models in Arena Expert, top 50 open in Text Arena.

- Google DeepMind Gemini 3.1 Flash Lite Preview — #36 Text Arena and the most cost-efficient Gemini 3.1 model, at $0.25 in / $1.50 out per MToken.

- PixVerse V5.6 — #15 in both Text-to-Video and Image-to-Video.

- Runway Gen-4.5 — tied for #15 in Text-to-Video, on par with Kling-2.6-Pro.

- Grok 4.20 Beta Reasoning — #7 in Search Arena, #11 in Text Arena, #22 in Vision Arena.

Every ranking on Arena is powered by real-world human votes in anonymous side-by-side comparisons. Come test the latest models, vote, and help shape where the frontier goes next.