New Categories for Web Development in Code Arena

AI coding models are increasingly used to build web apps, but aggregated leaderboards obscure key performance differences. After analyzing 250k+ Code Arena prompts, we identified major front-end task categories and built new leaderboard views to compare model strengths and weaknesses.

Code Arena has evolved rapidly alongside model capabilities. Early interactions often involved single-file HTML pages or small frontend components. Today, users ask models to build multi-file React applications, implement dashboards, develop consumer products, create browser games, simulate dynamic systems, and build editing tools.

The evaluation setting has also changed. Models increasingly operate with tools for file editing, execution, web access, and media understanding. As a result, Code Arena is no longer only measuring whether models can write valid code. It is measuring whether models can complete diverse, product-oriented development tasks under realistic conditions.

This shift means a single global leaderboard is no longer sufficient on its own. Web development tasks vary substantially in intent and required capability: a polished landing page, a data-heavy admin dashboard, a browser game, and a physics simulation stress different model behaviors. Category-specific leaderboards provide a more interpretable way to measure these differences.

Building the Taxonomy

We analyzed more than 250,000 filtered Code Arena prompts collected over five months, focusing on web development tasks. We used clustering analysis to identify recurring patterns in user intent and task structure, then refined the resulting groups through an iterative taxonomy-building process. The refinement process optimized for four criteria:

- Interpretability: Categories should be understandable to users, researchers, and model developers. Each category should describe a recognizable type of web development task or a representative user intent.

- Coverage: The taxonomy should capture a broad share of real user behavior in Code Arena.

- Statistical robustness: Each category should contain enough examples to support reliable leaderboard estimates.

- Boundary clarity: Categories should distinguish meaningful differences in task intent and model capability, while still allowing overlap when prompts are naturally multi-domain.
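The coverage and statistical robustness criteria can be checked mechanically once prompts carry category tags. The following is a minimal sketch, not the pipeline used for Code Arena: the sample data, the `MIN_EXAMPLES` threshold, and all function names are hypothetical and chosen only for illustration.

```python
from collections import Counter

# Hypothetical tagged prompts: each entry is the set of categories assigned to
# one prompt. An empty set means the taxonomy did not cover that prompt.
tagged_prompts = [
    {"Gaming"},
    {"Simulations"},
    {"Reference-Based Design", "Data & Analytics Applications"},
    set(),
    {"Gaming", "Simulations"},
    {"Reference-Based Design"},
]

# Assumed threshold for illustration; a real leaderboard would need far more.
MIN_EXAMPLES = 2


def coverage(prompts):
    """Fraction of prompts that received at least one category."""
    if not prompts:
        return 0.0
    return sum(1 for tags in prompts if tags) / len(prompts)


def category_counts(prompts):
    """Prompts per category; a multi-label prompt counts once per tag."""
    counts = Counter()
    for tags in prompts:
        counts.update(tags)
    return counts


def underpowered(prompts, min_examples):
    """Categories with too few examples to support a stable estimate."""
    counts = category_counts(prompts)
    return sorted(c for c, n in counts.items() if n < min_examples)


print(f"coverage: {coverage(tagged_prompts):.2f}")
print("underpowered:", underpowered(tagged_prompts, MIN_EXAMPLES))
```

In an iterative refinement loop, a category flagged by `underpowered` would be a candidate for merging with a neighbor, while low coverage would suggest that a recurring kind of prompt is still missing from the taxonomy.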

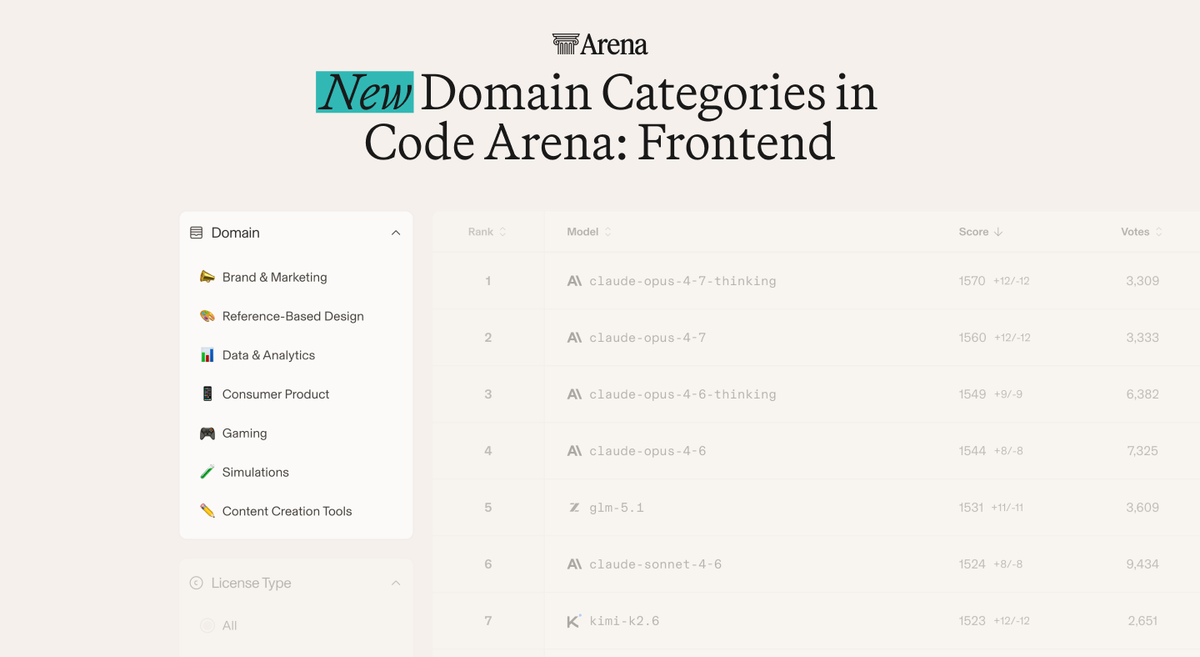

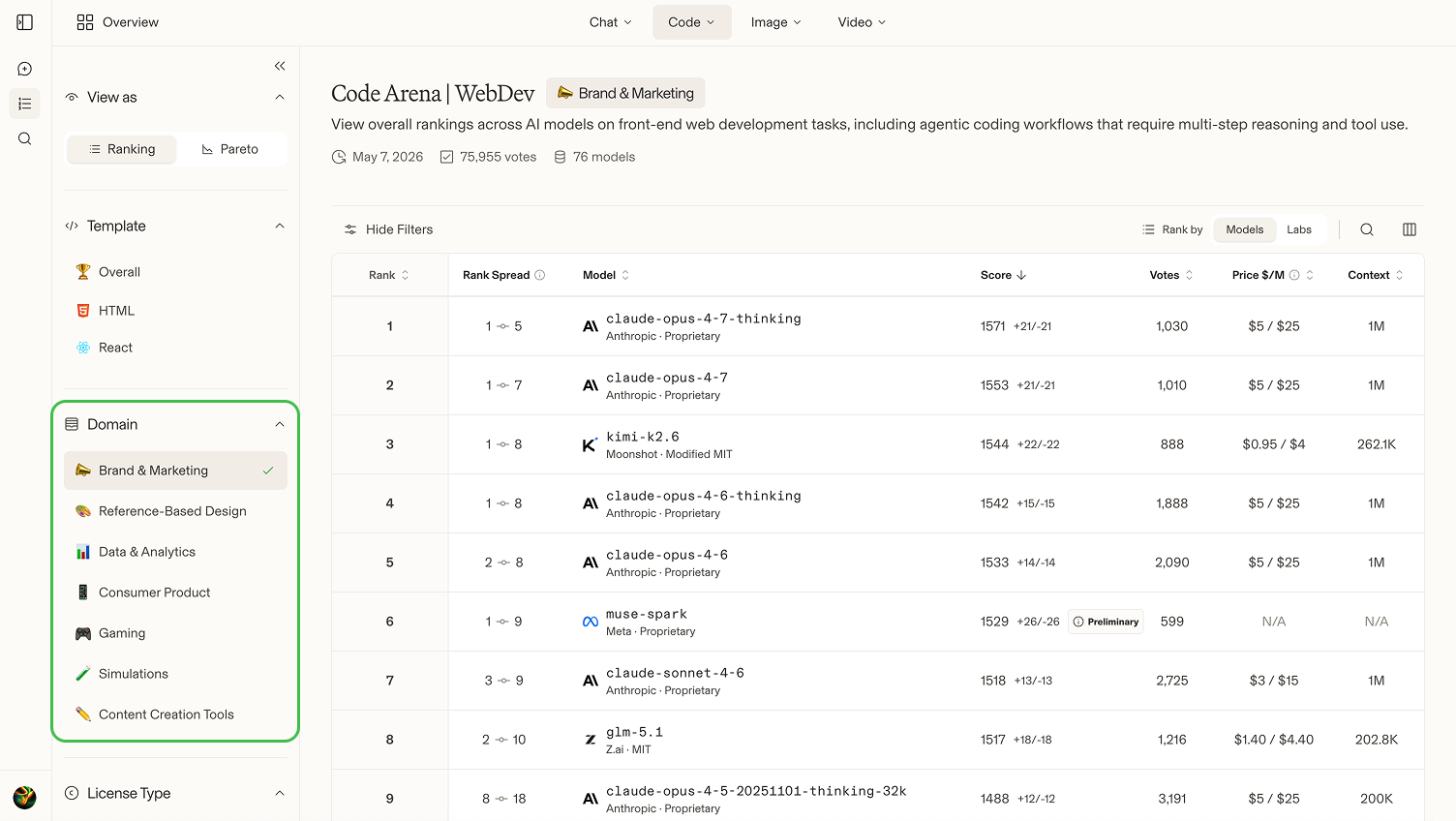

Categories in Code Arena WebDev

Categories are the primary way we organize prompts in Code Arena WebDev. Each prompt can be tagged with one or more categories, since real web development requests often mix several intents in one task.

When you view a category leaderboard, you are seeing the same evaluation methodology as the main Code Arena WebDev leaderboard, filtered to a specific prompt domain. This gives a clearer view of how models perform on different kinds of web development work.
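Conceptually, a category leaderboard is the overall computation applied to a filtered subset of comparisons. The sketch below illustrates that idea under stated assumptions: the rating method is not described here, so a plain win rate stands in for it, and the record layout and model names are hypothetical.

```python
from collections import defaultdict

# Hypothetical pairwise comparison records: two models, the preferred one,
# and the category tags of the prompt that was used.
battles = [
    {"a": "model-x", "b": "model-y", "winner": "model-x", "categories": {"Gaming"}},
    {"a": "model-x", "b": "model-y", "winner": "model-y", "categories": {"Simulations"}},
    {"a": "model-y", "b": "model-z", "winner": "model-y", "categories": {"Gaming", "Simulations"}},
    {"a": "model-x", "b": "model-z", "winner": "model-x", "categories": {"Gaming"}},
]


def win_rates(records):
    """Win rate per model; a simple stand-in for the real rating method."""
    wins, games = defaultdict(int), defaultdict(int)
    for r in records:
        games[r["a"]] += 1
        games[r["b"]] += 1
        wins[r["winner"]] += 1
    return {m: wins[m] / games[m] for m in games}


def leaderboard(records, category=None):
    """Rank models by win rate, optionally restricted to one category."""
    if category is not None:
        records = [r for r in records if category in r["categories"]]
    rates = win_rates(records)
    # Ties are broken alphabetically so the ordering is deterministic.
    return sorted(rates, key=lambda m: (-rates[m], m))


print("overall:    ", leaderboard(battles))
print("simulations:", leaderboard(battles, "Simulations"))
```

Because a prompt can carry several tags, the same comparison can contribute to more than one category view, as the third record does here.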

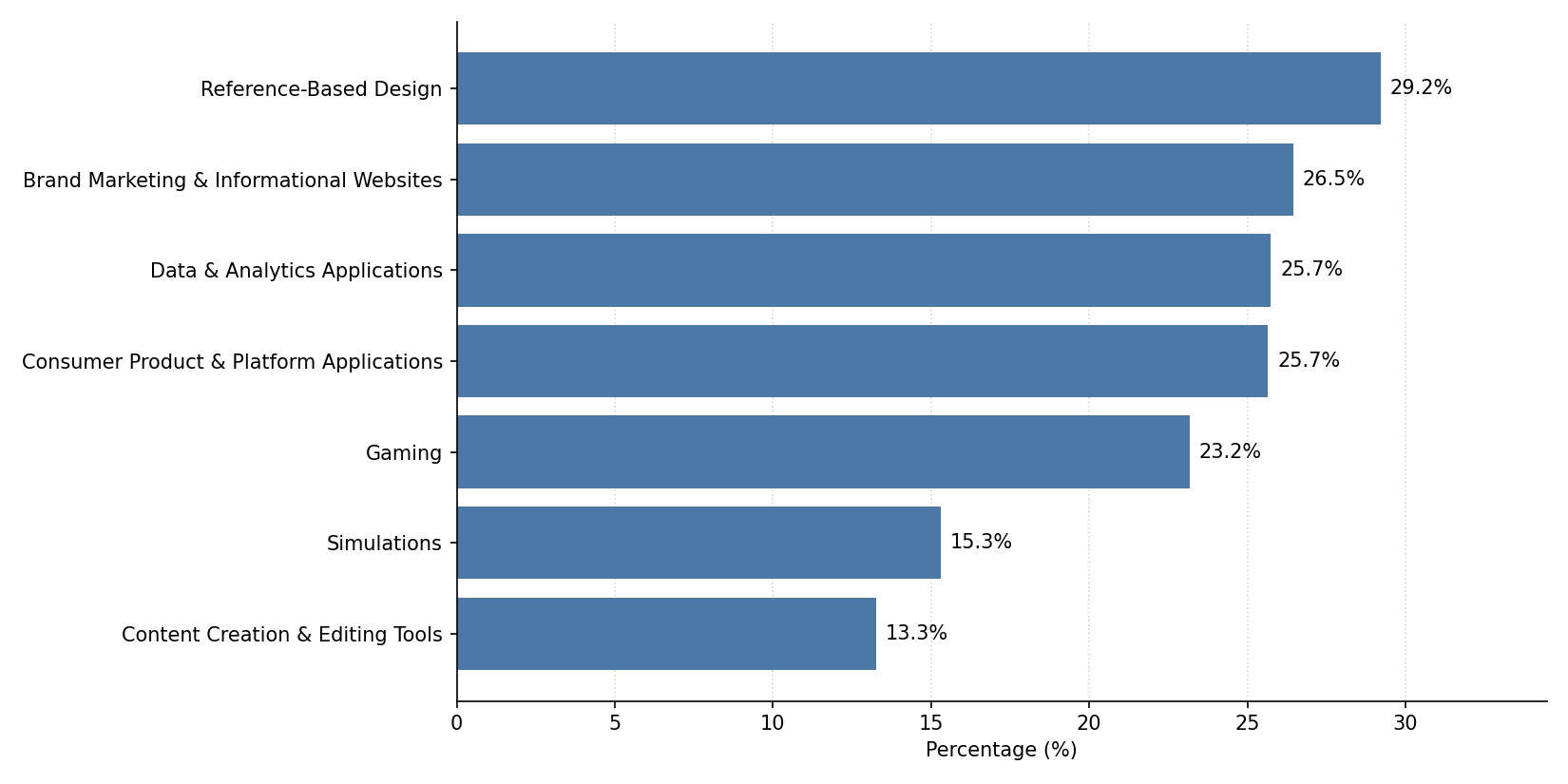

We introduce seven categories for WebDev based on the data we analyzed. These categories cover a wide range of user intent, from polished marketing websites to games, simulations, dashboards, and editing tools. Their frequencies vary across the dataset: Reference-Based Design is the most common at roughly 29%, while more specialized categories such as Simulations account for about 15.3%. Because categories are not mutually exclusive, prompts can overlap. For example, a reference-based dashboard may fall under both Reference-Based Design and Data & Analytics Applications.
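One consequence of multi-label tagging is that category shares need not sum to 100%. A small sketch with made-up prompts shows how shares and pairwise overlaps could be computed; the data and function names are illustrative assumptions, not Code Arena figures.

```python
from collections import Counter
from itertools import combinations

# Hypothetical prompts, each tagged with one or more categories.
prompts = [
    {"Reference-Based Design", "Data & Analytics Applications"},
    {"Reference-Based Design"},
    {"Simulations"},
    {"Gaming", "Simulations"},
]


def category_shares(tagged):
    """Share of prompts carrying each tag; shares can sum to more than 1."""
    counts = Counter(tag for tags in tagged for tag in tags)
    return {c: n / len(tagged) for c, n in counts.items()}


def pair_overlaps(tagged):
    """How often each pair of categories appears on the same prompt."""
    pairs = Counter()
    for tags in tagged:
        pairs.update(combinations(sorted(tags), 2))
    return pairs


shares = category_shares(prompts)
print(shares)
print("sum of shares:", sum(shares.values()))
print(pair_overlaps(prompts))
```

With four prompts and six tags in total, the shares add up to 1.5, which is why per-category percentages should be read as "share of prompts with this tag" rather than as slices of a pie.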

Brand, Marketing & Informational Websites

This category includes prompts for websites that present information, products, brands, people, events, or organizations. It covers landing pages, company sites, portfolios, blogs, documentation sites, launch pages, and similar sites.

An example prompt from a user:

Create a Padel-Animation (the sport commonly referenced as a mix between squash and tennis) on the main website.

Reference-Based Design

This category includes prompts that ask for a website or app inspired by a known product, company, website, or interface. These prompts often test whether models can capture a recognizable layout, interaction pattern, or visual style.

An example prompt from a user:

Build a Windows 95 desktop with draggable windows, start menu, taskbar with clock, desktop icons, and proper z-index stacking.

Consumer Product & Platform Applications

This category includes user-facing apps where people browse, communicate, shop, book, post, transact, or consume content. It covers marketplaces, social apps, chat apps, booking products, streaming apps, e-commerce sites, and similar platforms.

An example prompt from a user:

create a google chrome extensions, make a fully animated cyberpunk UI for whole Google Drive, include a Gmail addon to make the important email highlighted in one group .Add a button to download full extension file .zip for upload in store .Also make a editor to select the neon light colors, adjust the brightness

Gaming

This category includes browser games and gameplay-first experiences. These prompts usually involve rules, objectives, player actions, scoring, levels, obstacles, or win/loss conditions.

An example prompt from a user:

Create a chess game with legal move highlighting, piece capture, check/checkmate detection, move history, and optional computer opponent

Simulations

This category includes interactive models where users change inputs and observe how a system behaves. It covers physics demos, educational simulations, scientific models, financial calculators, engineering models, and other explorable systems.

An example prompt from a user:

Create a web app that allows me to visualize the first and second derivative of an original function. there can be some presets but also a way for me to input my original function or the first or second derivative function. then the graphs would appear correctly showing the derivatives. i can drag a little point on the graph and see where it coresponds to on the other graphs. make it simple and easy to use and fit everything onto the screen with no scrolling needed.

Content Creation & Editing Tools

This category includes tools for creating, generating, customizing, or editing an output artifact. Examples include SVG generators, logo makers, drawing tools, diagram editors, image editors, layout builders, and design tools.

An example prompt from a user:

Create a color theory app with interactive wheel, harmony demonstrations, contrast checker, and colorblind simulator.

Prompt Category Distribution Shifts over Time

Category-level leaderboards give us a clearer signal for understanding how web development use cases are evolving in Code Arena. By analyzing the prompt distribution across categories over time, we can see that practical, real-world tasks (such as brand, marketing, and informational websites, data and analytics applications, and consumer product and platform applications) are becoming a larger share of web development prompts.

At the same time, categories like gaming and simulations are declining in relative share, yet their absolute volume may remain meaningful as overall usage grows. This distinction is important: category-level views help separate changes in user demand from changes in total platform volume.
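The difference between relative share and absolute volume is simple arithmetic, illustrated below with invented numbers. The monthly figures, field names, and the `trend` helper are assumptions for illustration only and do not reflect real Code Arena data.

```python
# Hypothetical monthly snapshots: total prompts and prompts tagged "Gaming".
monthly = [
    {"month": "month 1", "total": 1000, "gaming": 300},
    {"month": "month 2", "total": 1800, "gaming": 360},
]


def trend(snapshots, key):
    """Change in absolute volume and in relative share from first to last snapshot."""
    first, last = snapshots[0], snapshots[-1]
    return {
        "volume_change": last[key] - first[key],
        "share_change": last[key] / last["total"] - first[key] / first["total"],
    }


result = trend(monthly, "gaming")
print(f"volume change: {result['volume_change']:+d} prompts")
print(f"share change:  {result['share_change']:+.1%}")
```

In this toy example the category gains 60 prompts while its share falls from 30% to 20%, because total volume grew faster than the category did.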

Together, these trends show why category-specific leaderboards are valuable. They do not just break down model performance more precisely; they also help track how real user needs shift over time, making the leaderboard more aligned with the applications people are actually building.

Illustration of Category Leaderboards

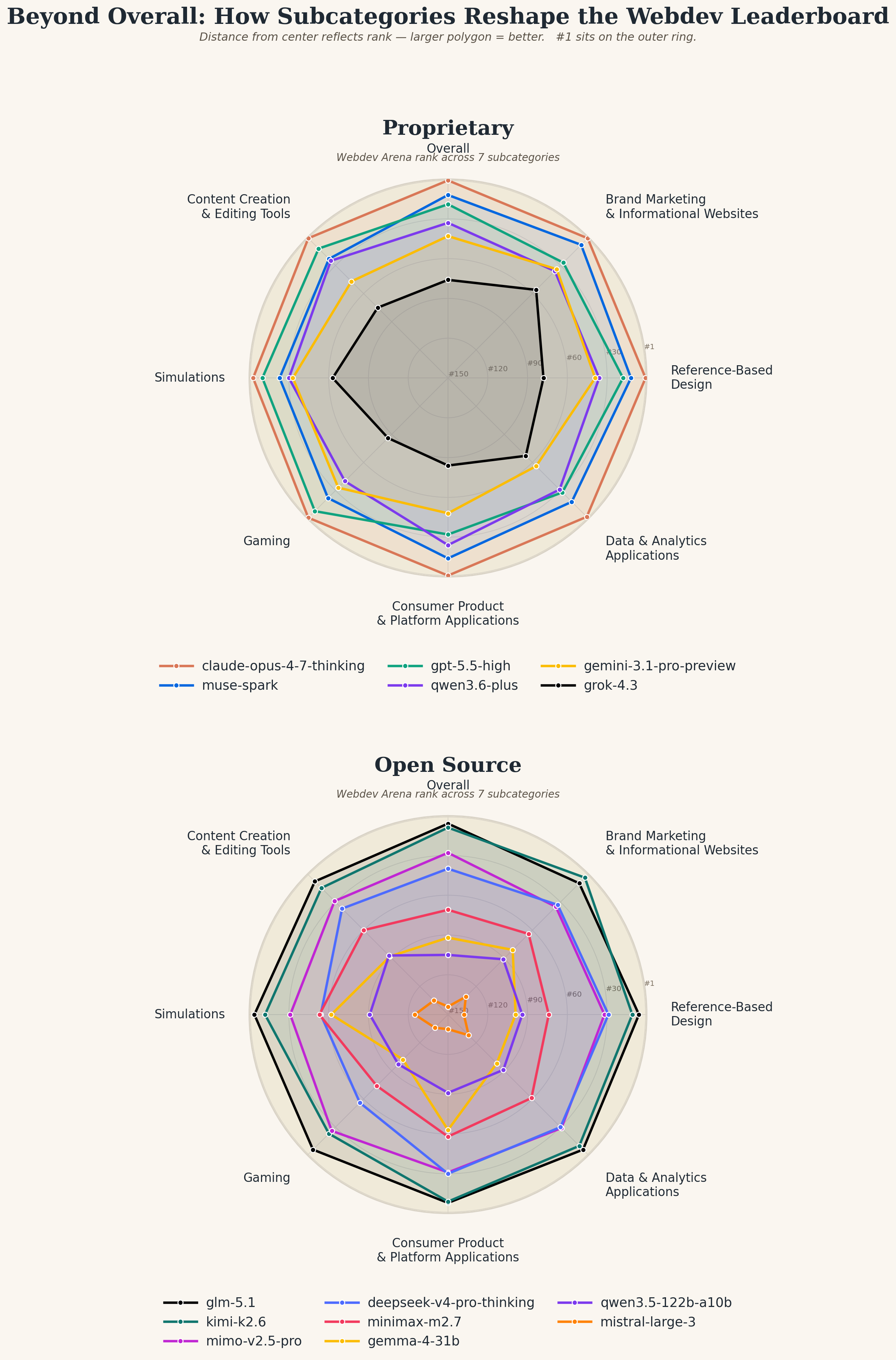

Category leaderboards reveal how much model rankings can change once web development is broken into concrete use cases. To illustrate this, we compare flagship models from several leading labs on both their overall Code Arena WebDev rank and their ranks across the seven web development categories, based on the most recent leaderboard snapshot.
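A comparison of this kind amounts to ranking models within each view and looking at how far each model moves relative to its overall position. The sketch below uses hypothetical model names and scores; it is not the method behind the radar plots, only an illustration of the rank-shift idea.

```python
def ranks(scores):
    """Map each model to its rank (1 = best) given a model -> score dict."""
    ordered = sorted(scores, key=lambda m: (-scores[m], m))
    return {m: i + 1 for i, m in enumerate(ordered)}


def rank_shifts(overall, by_category):
    """Overall rank minus category rank per model (positive = ranks higher in category)."""
    base = ranks(overall)
    return {
        cat: {m: base[m] - r for m, r in ranks(scores).items()}
        for cat, scores in by_category.items()
    }


# Hypothetical scores, invented for illustration.
overall = {"model-x": 1500, "model-y": 1480, "model-z": 1450}
by_category = {
    "Gaming": {"model-x": 1470, "model-y": 1510, "model-z": 1440},
    "Simulations": {"model-x": 1520, "model-y": 1450, "model-z": 1460},
}

for category, shifts in rank_shifts(overall, by_category).items():
    print(category, shifts)
```

Here `model-y` ranks one place higher in Gaming than it does overall and one place lower in Simulations, the kind of movement an aggregate ranking would hide.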

Among proprietary models, Claude Opus 4.7 Thinking shows broad strength across categories, with consistently high rankings throughout the radar plot. GPT-5.5 High also performs strongly overall, with especially visible strengths in interactive tasks such as simulations and gaming. Muse-Spark from Meta stands out in practical website and product-building scenarios, including brand marketing and informational websites, reference-based design, and consumer product and platform applications.

The open-source leaderboard shows a similarly rich picture. GLM-5.1 and Kimi-K2.6 both achieve strong category coverage, while their shapes highlight different areas of emphasis across web development tasks. Google’s Gemma-4-31B also shows why category views are useful: its profile is especially competitive in consumer product and platform applications relative to several other categories, making its strengths easier to see than in an aggregate ranking alone. Other open-source models also show meaningful category-level strengths, such as Mimo-V2.5 Pro in creative and interactive categories including gaming, simulations, and content creation and editing tools.

Looking Ahead

Code Arena is still changing quickly. As models improve and tooling matures, we expect the category taxonomy to evolve as well. These seven categories provide a clearer way to understand how models perform across the kinds of web development tasks users actually ask for. Future directions include introducing finer-grained subcategories, analyzing tool-use patterns, and breaking down performance by interaction complexity, execution success, and visual quality.